Study Finds Exponential Quantum Advantage in Machine Learning Tasks


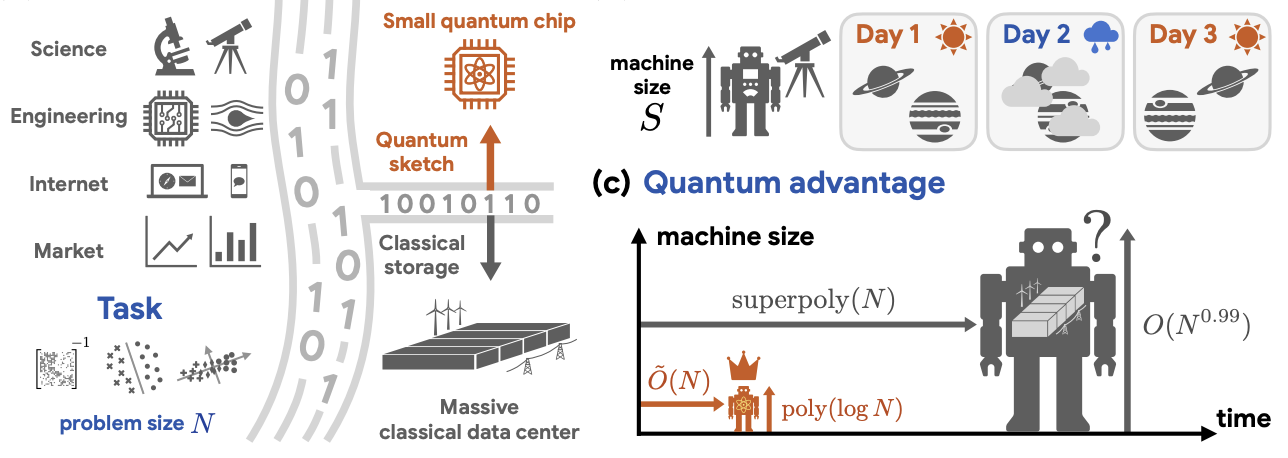

Insider Brief

Small quantum computers could process massive datasets more efficiently than far larger classical systems, according to a study recently posted on arXiv that outlines a path to exponential gains in machine learning and data analysis.

The study, conducted by researchers from Caltech, Google Quantum AI, MIT and Oratomic, reports that quantum systems with relatively few qubits can carry out core data-processing tasks (such as classification, dimension reduction and solving systems of equations), while classical computers would require exponentially more memory to match the same performance. The findings suggest that quantum computing may extend beyond specialized applications and find its way into mainstream data workloads.

The work addresses the efficient handling of classical data, a long-standing limitation in quantum computing. Many proposed quantum algorithms rely on storing large datasets in specialized quantum memory, which remains impractical with current technology. According to the researchers, this bottleneck has limited the real-world usefulness of quantum machine learning despite decades of theoretical progress.

The new study introduces a method called “quantum oracle sketching,” which allows a quantum computer to process data streams without storing the full dataset. Instead of loading all data into memory, the system ingests samples one at a time, applies a sequence of small quantum operations and discards each sample after processing. Over time, these operations build a compact representation of the data inside the quantum system.

According to the team, this approach lets the quantum system access classical information in a way that preserves the advantages of quantum computation while avoiding the need for large-scale quantum memory.
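The article does not spell out how the sketch is built, so the following is only a toy classical illustration of the streaming idea, not the researchers' algorithm. It assumes, purely for illustration, that each sample contributes one small diagonal phase rotation (angle π/N for a stream of N samples) to an n-qubit register and is then discarded. Because diagonal rotations commute, the product over the whole stream encodes each label's empirical frequency as a phase. The function name `sketch_phase_oracle` and the choice of rotation are hypothetical. Note that a classical simulation has to store all 2^n diagonal entries, which is exactly the memory cost a quantum register would avoid.

```python
import cmath
import math
import random
from typing import Iterable, List


def sketch_phase_oracle(stream: Iterable[int], num_qubits: int,
                        num_samples: int) -> List[complex]:
    """Toy classical simulation of a streaming 'sketch' of a phase oracle.

    Hypothetical construction for illustration only: each incoming sample x
    applies a small diagonal phase rotation exp(i*pi/num_samples) to basis
    state |x>, after which the sample is discarded. Diagonal rotations
    commute, so after the whole stream the accumulated operator is
    diag(exp(i*pi*p_hat(x))), where p_hat(x) is x's empirical frequency.
    Assumes num_samples (the stream length) is known in advance.
    """
    dim = 2 ** num_qubits
    step = cmath.exp(1j * math.pi / num_samples)
    # A classical simulator must keep all 2**num_qubits diagonal entries;
    # a quantum device would act on just num_qubits qubits.
    diagonal = [complex(1.0, 0.0)] * dim
    for x in stream:
        if not 0 <= x < dim:
            raise ValueError(f"sample {x} is outside the {dim}-state register")
        diagonal[x] *= step  # apply one small operation for this sample
        # Nothing about x is retained after this point.
    return diagonal


if __name__ == "__main__":
    rng = random.Random(0)
    n_qubits, n_samples = 3, 4000
    data = [rng.choice([0, 0, 1, 5]) for _ in range(n_samples)]
    diag = sketch_phase_oracle(iter(data), n_qubits, n_samples)
    for label, entry in enumerate(diag):
        # phase / pi recovers each label's empirical frequency in the stream
        print(label, round(cmath.phase(entry) / math.pi, 4))
```

Passing `iter(data)` mirrors the one-pass setting: the function never looks back at earlier samples, and its only state is the accumulated operator.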
Quantum oracle sketching replaces earlier approaches that relied on quantum random access memory, or QRAM, which would require complex and resource-intensive hardware.

The researchers combine this data-loading method with a second technique known as classical shadow tomography, which extracts useful information from quantum states using a limited number of measurements. Together, the techniques allow the system to produce classical outputs, such as trained models, without needing to reconstruct or store the entire dataset.

The study reports simulation results on real-world datasets, including movie review sentiment analysis and single-cell RNA sequencing. In these tests, the quantum approach achieved performance comparable to classical methods while using significantly less memory. According to the researchers, the memory reduction ranged from four to six orders of magnitude, with the quantum system operating with fewer than 60 logical qubits. It is important to note that these figures come from simulations and theoretical analysis rather than experiments on physical quantum hardware.

These results point to a potential new way of defining quantum advantage. Much of the field has focused on speed, that is, how quickly a quantum computer can solve a problem compared with a classical one. This study instead emphasizes memory, suggesting that quantum systems may require far less storage to perform the same tasks.

The implications are particularly relevant for industries that handle large, high-dimensional datasets. Fields such as genomics, finance and climate modeling often face limits not only in computation time but also in memory capacity. According to the researchers, quantum systems could offer a way to compress and process data more efficiently, reducing the need for large-scale storage infrastructure.

The study also presents theoretical results that support the simulations.
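Before turning to those theoretical results, the classical shadow tomography step mentioned above can be illustrated with the textbook randomized-Pauli-measurement version of classical shadows. This is a minimal sketch under simplifying assumptions of our own, not the researchers' pipeline: the state is a product of single-qubit states given as Bloch vectors (so outcomes can be sampled qubit by qubit), and the goal is estimating Pauli-string expectation values. The names `take_snapshot`, `shadow_estimate` and `exact_expectation` are hypothetical.

```python
import math
import random
from typing import Dict, List, Sequence, Tuple

PAULIS = ("X", "Y", "Z")
Bloch = Dict[str, float]          # single-qubit Bloch vector, e.g. |0> -> {"X": 0, "Y": 0, "Z": 1}
Snapshot = List[Tuple[str, int]]  # (random basis, outcome +1/-1) for each qubit


def take_snapshot(state: Sequence[Bloch], rng: random.Random) -> Snapshot:
    """Measure every qubit of a product state in a uniformly random Pauli basis."""
    snapshot = []
    for bloch in state:
        basis = rng.choice(PAULIS)
        p_plus = (1.0 + bloch[basis]) / 2.0  # Born rule: P(+1) = (1 + r_basis) / 2
        outcome = 1 if rng.random() < p_plus else -1
        snapshot.append((basis, outcome))
    return snapshot


def shadow_estimate(snapshots: Sequence[Snapshot], pauli: str) -> float:
    """Estimate <P> for a Pauli string such as "ZXI" from stored snapshots."""
    total = 0.0
    for snap in snapshots:
        value = 1.0
        for (basis, outcome), p in zip(snap, pauli):
            if p == "I":
                continue  # identity factors contribute 1
            if basis != p:
                value = 0.0  # basis mismatch: this snapshot contributes zero
                break
            value *= 3 * outcome  # factor 3 inverts the random-Pauli measurement channel
        total += value
    return total / len(snapshots)


def exact_expectation(state: Sequence[Bloch], pauli: str) -> float:
    """Exact <P> for a product state: product of the matching Bloch components."""
    return math.prod(state[i][p] for i, p in enumerate(pauli) if p != "I")


if __name__ == "__main__":
    rng = random.Random(7)
    # Three-qubit product state: |0>, |+>, and a pure state tilted in the Y-Z plane.
    state = [
        {"X": 0.0, "Y": 0.0, "Z": 1.0},
        {"X": 1.0, "Y": 0.0, "Z": 0.0},
        {"X": 0.0, "Y": 0.6, "Z": 0.8},
    ]
    snapshots = [take_snapshot(state, rng) for _ in range(20_000)]
    for pauli in ("ZII", "ZXI", "IIY", "XII"):
        print(pauli, round(shadow_estimate(snapshots, pauli), 3),
              "exact:", exact_expectation(state, pauli))
```

Each snapshot is just a short list of (basis, outcome) pairs, so the classical record stays small, and the same snapshots can be reused to estimate many different observables after the fact.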
The researchers show that, for the types of tasks considered, any classical system that matches the quantum system’s performance would need exponentially more memory or significantly more data samples. This separation holds even if classical systems are given unlimited time, indicating that the advantage is tied to how information is stored and processed, not just how quickly computations are performed.

The work focuses on three core applications. The first is solving large systems of equations, which appear in engineering, physics and network analysis. The second is classification, a common machine learning task used in applications such as sentiment analysis and fraud detection. The third is dimension reduction, which simplifies high-dimensional data to reveal underlying patterns, as in biological data analysis.

In each case, the study reports that a quantum system whose qubit count grows slowly with problem size can complete the task using a manageable number of data samples. Classical systems, by contrast, face a trade-off between memory and accuracy, particularly when using streaming methods that process data sequentially.

The researchers also examine scenarios where data changes over time, such as shifting user behavior or evolving environmental conditions. In these dynamic settings, the study indicates that classical systems require far more data samples to keep up, while quantum systems maintain their efficiency. This suggests a potential advantage for applications that rely on continuously updated data.

Despite these results, the study remains largely theoretical. The experiments are based on numerical simulations rather than physical quantum hardware. Current quantum computers are limited by noise, error rates and the difficulty of maintaining stable qubits.
Scaling from tens of logical qubits to the hundreds or more needed for practical deployment remains a significant challenge.

The study also assumes certain ideal conditions, such as reliable access to data samples and well-behaved datasets. Real-world data can be noisy, correlated and difficult to model. While the researchers indicate that their method can handle these conditions, experimental validation will be needed to confirm performance at scale.

Another limitation is the focus on memory rather than runtime. The quantum method still requires processing a large number of data samples, and the time required to perform the necessary quantum operations may reduce some of the practical gains. According to the researchers, the data-loading step dominates the runtime and is unlikely to be significantly reduced without further advances.

Integration with existing computing systems presents an additional challenge. Most data-processing pipelines rely on distributed classical infrastructure. Incorporating quantum components into these workflows will require new software tools and system designs.

The study outlines several directions for future work. One area is the development of hybrid systems that combine quantum and classical methods, using quantum processors for data compression or feature extraction. Another is the exploration of additional applications, including optimization, signal processing and scientific simulations, where similar advantages may appear.

The researchers also point to the need for experimental validation. Demonstrating these effects on real quantum hardware would provide stronger evidence for the claimed advantages and help identify practical constraints.
According to the team, such experiments could also serve as tests of quantum mechanics itself, particularly of how physical systems represent large amounts of information.

The research team included Haimeng Zhao and Hsin-Yuan Huang of the California Institute of Technology, with Zhao also affiliated with Google Quantum AI and Huang also affiliated with Oratomic; Alexander Zlokapa of the Massachusetts Institute of Technology; Hartmut Neven, Ryan Babbush and Jarrod R. McClean of Google Quantum AI; and John Preskill of the California Institute of Technology and Oratomic.

For a deeper, more technical dive, please review the paper on arXiv. It’s important to note that arXiv is a pre-print server, which allows researchers to receive quick feedback on their work. However, neither the arXiv posting nor this article is an official peer-reviewed publication. Peer review is an important step in the scientific process to verify results.